T. S. Eliot – The Cocktail Party

Category: Style

“art’s true value lies in its ability to not only survive times of change, but to actively drive and lead that change”

Robert Redford

Folks still wonder, “Why MCP?” Here is a step-by-step comparison.

Not much feels different. And that’s exactly why it confuses people. They expect magic.

Say I want a list of users. Here’s my pseudo-code way of sorting out the different ways of doing it.

Traditional-coder scenario

Human: “I want user data from your system.”

Human goes to the docs

Finds the right endpoint

Sets up auth

Writes code: GET /users

Parses the response and uses it

The human is doing discovery, auth, integration, and parsing.
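To make that concrete, here is a minimal sketch of what the human ends up writing by hand. The base URL, the bearer-token auth, and the shape of the response payload are all assumptions for illustration; a real service's docs would dictate each of them.

```python
import json
import urllib.request

BASE_URL = "https://api.example.com"  # hypothetical service URL


def build_users_request(token: str) -> urllib.request.Request:
    """Pick the endpoint, attach auth, and form GET /users."""
    return urllib.request.Request(
        f"{BASE_URL}/users",
        headers={"Authorization": f"Bearer {token}", "Accept": "application/json"},
        method="GET",
    )


def parse_users(body: bytes) -> list[str]:
    """Parse the response; the payload shape here is assumed."""
    payload = json.loads(body)
    return [user["name"] for user in payload["users"]]


def list_users(token: str) -> list[str]:
    """Run the whole manual flow against the (hypothetical) live service."""
    with urllib.request.urlopen(build_users_request(token)) as response:
        return parse_users(response.read())
```

Every line of that is knowledge the human had to dig out of the docs first.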

AI without MCP

Human prompts: “List users from System X, using this doc [url]”

AI tries to reverse engineer endpoints and payloads

Maybe it works, maybe it hallucinates

Human does not get coffee. They have to watch the AI’s progress and steer it.

The AI is the one reading the docs, but the developer is still doing work.

MCP scenario

Human: “List all users in a Modal”

That’s it. Human goes to get coffee.

AI checks connected MCP servers

AI asks: “Do I have a tool for this?”

Finds a structured tool like list_users exposed by that service’s MCP server

That tool defines:

- What it does

- What inputs it needs

- How auth works (handled server-side)

AI calls the tool through the MCP client

MCP server executes the call safely

Structured user data comes back

AI keeps going with real data

AI: “Modal with Users is ready for your review”
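The MCP flow above can be sketched with a toy, in-memory model. This is not the real MCP SDK: `ToyServer`, `find_tool`, and the sample users are hypothetical names made up for illustration. The only part borrowed from the protocol is the idea that a tool is described by a name, a description, and an input schema.

```python
from dataclasses import dataclass
from typing import Any, Callable, Optional


@dataclass
class Tool:
    name: str                     # e.g. "list_users"
    description: str              # what it does
    input_schema: dict[str, Any]  # what inputs it needs (JSON Schema style)
    handler: Callable[..., Any]   # runs server-side, where auth lives


class ToyServer:
    """Stands in for one service's MCP server (not the real SDK)."""

    def __init__(self, api_key: str) -> None:
        self._api_key = api_key  # the credential never leaves the server
        self._tools = {
            "list_users": Tool(
                name="list_users",
                description="Return all users in the system.",
                input_schema={"type": "object", "properties": {}},
                handler=self._list_users,
            )
        }

    def list_tools(self) -> list[dict[str, Any]]:
        # Only the public description of each tool is exposed to the client.
        return [
            {"name": t.name, "description": t.description, "inputSchema": t.input_schema}
            for t in self._tools.values()
        ]

    def call_tool(self, name: str, arguments: dict[str, Any]) -> Any:
        return self._tools[name].handler(**arguments)

    def _list_users(self) -> list[dict[str, str]]:
        # A real server would call its backend with self._api_key here.
        return [{"id": "1", "name": "Ada"}, {"id": "2", "name": "Lin"}]


def find_tool(servers: list[ToyServer], wanted: str) -> Optional[ToyServer]:
    """The AI's 'Do I have a tool for this?' step."""
    for server in servers:
        if any(tool["name"] == wanted for tool in server.list_tools()):
            return server
    return None


server = find_tool([ToyServer(api_key="secret")], "list_users")
users = server.call_tool("list_users", {})
```

Notice what the AI never touches: the docs, the endpoint, or the API key. It only sees the tool description and the structured result.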

Human Browsing Web

Human says, “I want to get Users for the [service]”

Human opens Chrome and goes to https://[service].com

Human clicks “Users” on the page.

They read the list of Users on the page and spam them (or whatever else humans like to do these days).

You don’t manually open a socket and craft packets. Your browser speaks a standard protocol and every website that follows it “just works.”

MCP is trying to be that layer, but for AI using software tools instead of humans using web pages. The key part is the contract. The AI is not inventing how to call your system. It is using a capability your system formally exposed over a shared protocol.
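Here is a rough sketch of what "the contract" buys you. The `list_users` contract below, including its `limit` and `role` inputs, is hypothetical, and the checker covers only a tiny slice of JSON Schema (required fields and two primitive types). The point is just that a call the AI invents on its own gets rejected before it ever reaches your system.

```python
from typing import Any

# Hypothetical contract for a list_users tool, in JSON Schema style.
LIST_USERS_CONTRACT = {
    "name": "list_users",
    "inputSchema": {
        "type": "object",
        "properties": {"limit": {"type": "integer"}, "role": {"type": "string"}},
        "required": ["limit"],
    },
}

_PYTHON_TYPES = {"integer": int, "string": str}


def contract_violations(contract: dict[str, Any], arguments: dict[str, Any]) -> list[str]:
    """Return the reasons a call breaks the contract; empty means it is valid."""
    schema = contract["inputSchema"]
    properties = schema.get("properties", {})
    problems = []
    for field in schema.get("required", []):
        if field not in arguments:
            problems.append(f"missing required input: {field}")
    for field, value in arguments.items():
        if field not in properties:
            problems.append(f"unknown input: {field}")
        elif not isinstance(value, _PYTHON_TYPES[properties[field]["type"]]):
            problems.append(f"wrong type for input: {field}")
    return problems


ok = contract_violations(LIST_USERS_CONTRACT, {"limit": 10})
guessed = contract_violations(LIST_USERS_CONTRACT, {"page_size": 10})
```

A guessed parameter like `page_size` comes back as a clear error instead of a silent wrong answer.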

“The curious paradox is that when I accept myself just as I am, then I can change.”

– Carl R. Rogers

“I am a warrior, so that my son may be a merchant, and his son a poet.”

– John Quincy Adams

Multi-Prompting Makes Multitasking Real

For years we treated multitasking as a skill. A badge of honor. A sign that someone could juggle more than the rest of us. But anyone who has actually tried real multitasking knows the truth: it never works as well as the multitasker thinks. The human brain simply is not built for parallelism. It is built for rapid switching, and rapid switching has real cognitive taxes.

Yet a strange thing has emerged in the age of AI. A new pattern. A new form of workflow. Not delegation, not automation, not parallelization. Something in between.

I call it multi-prompting.

Multi-Prompting as an example

Multi-prompting is what happens when you have several active projects, each one running in near-real time, with the help of an AI system you guide through tight loops of prompting and review. You prompt one project, then the next, then the next. By the time you return to the first, the AI has completed executing the task (in detail). You immediately see the results, assess them while the objective is still fresh in your short-term memory, refine the prompt (or create a new task), and send it back into motion.

The entire loop across multiple projects might take only a few minutes.
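If it helps to see the shape of that loop, here is a small simulation of one round. `run_agent` is a hypothetical stand-in for an AI working on a project (it just sleeps briefly), and the project names and prompts are made up. The pattern to notice is that every project is prompted first, and only then do you circle back to review.

```python
import asyncio


async def run_agent(project: str, prompt: str, seconds: float) -> str:
    """Hypothetical stand-in for an AI working on one project."""
    await asyncio.sleep(seconds)  # the AI executes while you are elsewhere
    return f"{project}: done '{prompt}'"


async def multi_prompt_round(prompts: dict[str, str]) -> list[str]:
    # Prompt every project first, so all of them run at the same time.
    tasks = [
        asyncio.create_task(run_agent(project, prompt, seconds=0.01))
        for project, prompt in prompts.items()
    ]
    # Then loop back to the first project and review results in order.
    reviewed = []
    for task in tasks:
        result = await task
        reviewed.append(result)  # review, refine the prompt, send it back
    return reviewed


results = asyncio.run(multi_prompt_round({
    "blog": "draft the intro",
    "api": "add pagination",
    "site": "fix the nav bug",
}))
```

The round takes about as long as the slowest project, not the sum of all three, which is why the whole loop can fit in a few minutes.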

The key is that nothing has left your mind. The objectives for all active projects remain in active memory, and the micro tasks are executed almost instantly. You are still steering. Still directing. Still deciding. The system is not replacing your cognition, it is amplifying it by absorbing the tedious layers of implementation. And it does so without making tiny errors like typos, which are the first things to slip when a human tries to multitask entire objectives.

Why It Is Not Delegation

Delegation is a full transfer of work. You hand something to someone else, they go off and implement it, and you hear about it later. Your mind no longer holds the details. You wait for updates. There may even be days where it never crosses your mind. That bandwidth is completely freed up.

Multi-prompting is the opposite. The work never leaves your head. You retain the objective. The AI takes on the lower-level implementation, the same way spell-checking takes on mechanical proofreading. You remain fully engaged. You never stop being the author of the work. You simply stop being the one doing the slowest parts.

The cognitive loop stays intact.

Why Multi-Prompting Works When Multitasking Fails

Human working memory can hold only a small number of active threads at once. Research usually puts the upper bound around four to seven items: https://www.sciencedirect.com/science/article/abs/pii/S0010027704000314

Traditional multitasking forces those threads to fight for attention. We lose details. We forget sequences. We get the order wrong because the interruptions break our mental stacks.

Multi-prompting shifts the burden. The AI holds the intermediate states, the logical steps, the incremental implementation. Your mind only holds the mission. The objective stays crisp because you are not spending your limited working memory rehearsing each substep.

You get to move to the next objective immediately. And when you return, nothing has decayed. The context is still there because the AI is preserving the continuity through rapid iteration.

A New Human Work Pattern

This is not a small shift. The human tech interface has gone through three major phases of labor:

- Humans do the work.

- Humans delegate work.

- Humans direct work.

Multi-prompting quietly introduces a fourth:

- Humans co-execute work.

It is not automation because you still drive the objectives. It is not delegation because nothing is handed off. It is more like working with multiple cognitive extensions that each operate at computer speed while you maintain the higher-level coherence.

Your mind becomes the conductor. The AI becomes the orchestra.

And suddenly you can work across many projects without losing the plot of any of them.

The Future of Daily Work

In ten years, people will look back at how we worked in 2023 and realize how primitive the workflows were. We had powerful machines, but we used them in single-threaded patterns carried over from the industrial age. Multi-prompting is one of the first glimpses of a different kind of knowledge work. One where human intention stays front and center, while machines handle the cognitive drudgery that used to slow us down.

We will not call this multitasking. We will not call it delegation. We will probably give it a better name than multi-prompting.

But the shift is already here.

One minute of human direction. One minute of machine execution. A continuous loop. And a new concept of our internal thinking flow and what one person can accomplish.

Socratic AI: The debate-based Writing Method to create better content

When asking AI to write articles, I think most people prompt apps to “Write about this…”. They provide some details about what to write, more or less, and then use AI to help with the editing. It’s akin to having an editor or ghostwriter.

I started in the same way, but always felt like I was battling the AI instead of working with it. I’ve come to use it very differently. Not only do I love this new method, but I learn a lot from the experience each time.

Instead of asking AI to write for me, I use it to think through concepts with me. To have it debate or question my thoughts. To specifically “not write an article” for quite some time until I think we are on the same page. This can sometimes take weeks, strewn with small chats and long breaks in between, until a new thought sparks up again.

This whole approach started by accident when I discovered more personality with GPT-4. One day I got riled up from reading some shallow post. It sparked a mental argument with myself to try and see how “the other side” could come to such a different conclusion. On a whim I gave ChatGPT a chance to give me the other side, and it surprised me. It not only delicately agreed with my POV, but it gave another potential position followed by “if you could change the circumstance how would you do it?”

It didn’t just echo my points. It pushed back. It made counterarguments. It sharpened the conversation. I ended up having a long conversation with the AI. By the end of it, I understood my own idea better. I felt like I had a smart, patient thought partner who genuinely got what I was trying to work through. It was mind blowing.

That’s when it hit me. If GPT can do this with abstract ideas, why not use the same kind of back-and-forth to help me write?

That’s how this process was born. I’m not starting with a goal to create a draft. I’m starting with a goal to think through a conversation and see where it leads.

What I’ve found feels like a modern revival of the Socratic dialectic. It gives me a space where I can toss out half-formed thoughts, question assumptions, test ideas, and refine them through dialogue. Some go nowhere, but all end with a better grasp of my original thought or counter thoughts.

I keep all my writing in a single project so GPT has context from everything I’ve written or said before. When I want to explore something new, I open a fresh thread and say:

“I don’t want anything created yet. I want to jot thoughts down and then I’ll let you know if I’m ready to create something or if I want to dig deeper.”

Then I just post whatever comes to mind. No outline. No goal. Just the original vapor of a concept. Sometimes I ramble. Sometimes I loop back or take side paths. Sometimes I ask:

“What do you think?” or “Is there a counterpoint I’m missing?”

And it responds. Not with a final draft, but with friction. With momentum. With more angles to explore.

I think best in conversation. I rarely find clarity in a vacuum. Often I will argue a point with someone and walk away with a whole new version or perspective on my belief. Often, I push on ideas, debate myself, and churn.

So when GPT became more conversational, it clicked. It felt like I finally had a thinking partner who didn’t judge, remembered everything, and had no distinct side. The result isn’t just better writing. It’s better thinking.

Once the idea has been explored enough, I ask GPT to turn the thread into an article. Since it has been there for the full conversation and already knows my tone from past articles, the first draft usually comes back pretty close to what I want.

It is never final, but it is far more in line with what I want, and far closer to final, than anything I have ever tried to create with AI before.

Once I am done, I end the thread by posting my final version in the project:

“Here’s the one I actually used. Save this to memory. No more feedback or follow up needed.”

Over time, it learns me. My tone. My rhythm. The kinds of lines I keep, the ones I cut, and the ones I repeat for emphasis. It becomes both a mirror and a co-writer.

So no, I don’t start by asking GPT to write something. I start by asking it to listen. To push back. To help me think through things better. This isn’t AI-assisted writing, it is AI-assisted dialectic.

Good, Fast, AND Cheap: How Great Founders Achieve the Impossible

How can you break through the “Pick Two” rule of the Iron Triangle? Let’s start with an experiment…

Try This

- Find something nearby. A cup, a notebook, a shoe.

- Now start a timer for 15 seconds.

- Describe what you see. Either out loud or write it down. When the timer ends, stop.

Done? Good. Put that aside for a second.

Next, Try This

- Start a new timer for 60 seconds.

- Look at that same object from the last test again.

- Try your hardest to keep describing it. Notice things you may not have considered last time. Push yourself to find more. Don’t stop early, even if you think you’re done.

- When time’s up, compare the two.

What changed?

Most people will find that even if they were confident after 15 seconds they described it well, with the 60-second description they went deeper. Found more texture, more color, more shades (figuratively or literally). Of course, nothing about the object changed. What changed was your focus.

This is where quality comes from: not from pure blood-sweat-and-tears effort, or from adding more things to do (more objects to look at), but from choosing where to pay attention and staying there.

Let’s try one more thing

- Pick a single part of that same object. A seam on a shoe. The rim of a cup. The spine of a notebook.

- Look only at that.

- Look as long as you can. I think you will see the point.

It’s smaller. More focused. And what you thought was nothing has many qualities of its own. Now there’s less to process. Less ambiguity.

Did you do more with less time, or less with more time? Was there less quality when you zoomed in? That whole Iron Triangle is a fallacy. Did you lose speed? No. You gained clarity. You went deeper, faster.

“Good, fast, cheap: pick two” is a great saying. I like it. I’ve used it to help me make decisions. But like with all tips, it is not the whole story. If you have 20 things to do, you will need to make sacrifices, but it is better to reframe it: in the same amount of time, how many of the 20 can I do well if I cut 10 of them from the list? I bet then you can achieve good, fast, and cheap. Maybe it should be an Iron Square: Good, Fast, Cheap, and Doing Everything. Getting all four is what is impossible.

Focus works like that. It’s a cycle. Narrowing scope increases speed. Committing attention increases quality. Together, the two carry over to any other lens you care about, like cost and complexity.

This isn’t just an observation trick. It’s how great startups win.

Most people think building something great takes time, a lot of time. Or money. Or a big team. But that’s because they misunderstand where quality actually comes from.

It’s not time. It’s not budget.

It’s focus.

Just like the object you examined, a product isn’t “done” because you’ve spent weeks on it. It’s great because someone paid sustained attention to exactly the right part of it.

That’s what great founders do. They don’t cut corners. They cut scope. They shrink the surface area until it fits their resources and then they go deeper on it than anyone else would.

When you have only one thing to solve, you don’t get analysis paralysis. You decide quickly because you’re not drowning in competing priorities. You can scope tightly and say, “Let’s find some smart ways to hack this with other tools or techniques,” because you’re not burdened by the whole picture. You just need to nail this specific piece.

This is how speed and quality stop being tradeoffs. Focus increases both. Less guessing. Fewer distractions. Faster feedback. Better outcomes.

A big company might have a thousand people working on a thousand things. A great startup gets 3 people working on the right thing for just long enough to make it undeniable.

That’s how you get good, fast, and cheap all at once.

It’s not impossible. It’s just focus.

“My dear Degas, poems are not made of ideas, they are made of words.”

– Stéphane Mallarmé

Start Coding from Your Phone for $15/mo

Last week I updated some functionality in my repo’s codebase, with my iPhone, while drinking beer, in the jacuzzi. It was glorious. With a simple-to-set-up environment you can code from anywhere and keep your AI on track, whether you’re walking your dog or using the bathroom.

Here’s how:

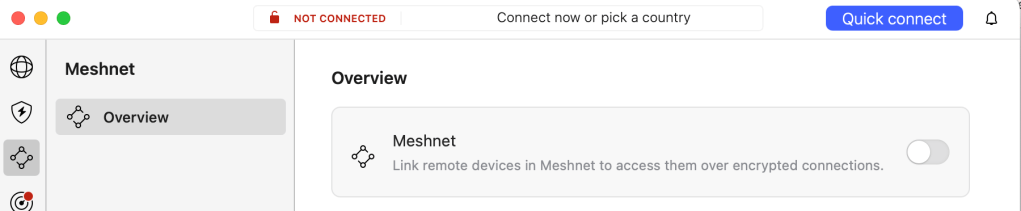

Step 1: Set Up a VPN with Meshnet on your Laptop and Phone

Not only is having a Virtual Private Network running on your devices good practice for privacy and security, but NordVPN comes with a free Meshnet feature. Meshnet lets your devices securely talk to each other from anywhere in the world—no complex setup, no extra cost.

To get started, turn on Meshnet on your laptop or desktop.

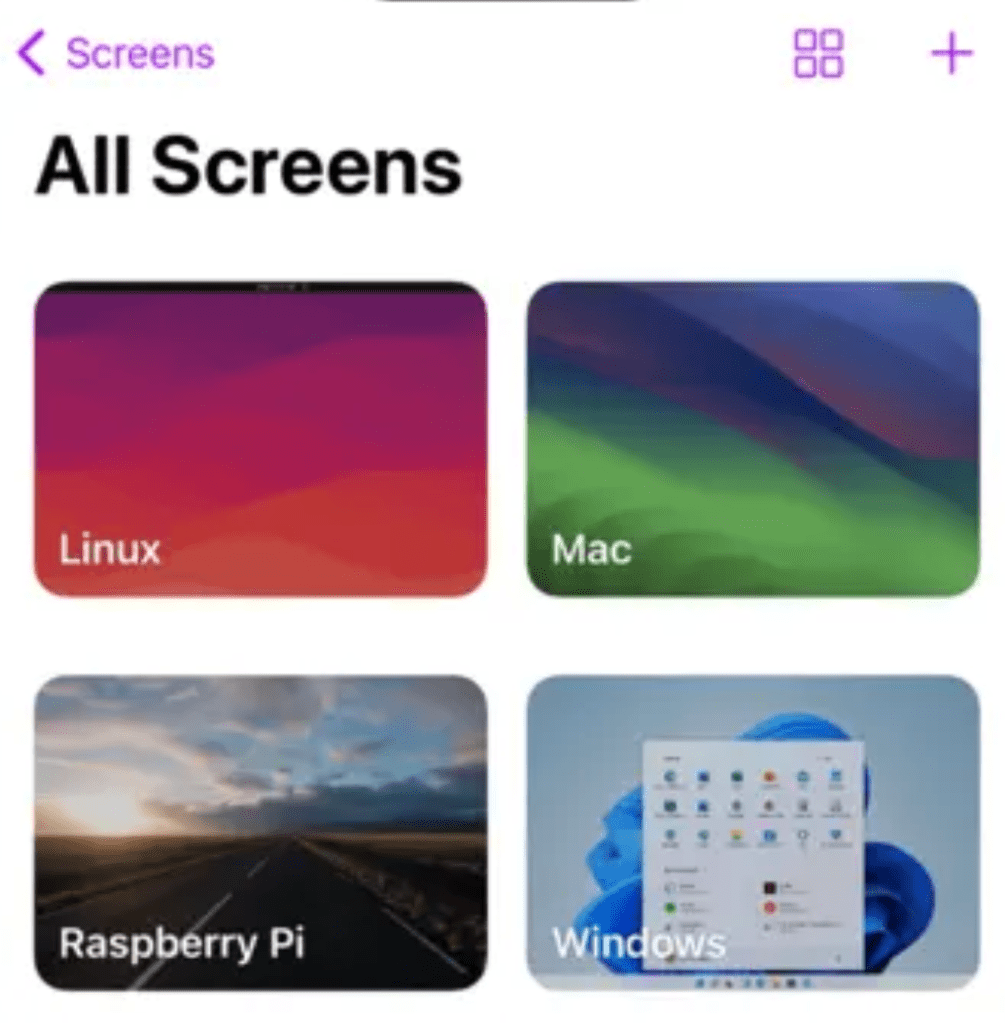

Step 2: Install a Remote Desktop App

Download a remote desktop app that lets you connect to a specific IP or device. I use Screens (a one-time $3.99 purchase – https://apps.apple.com/us/app/screens-5-vnc-remote-desktop/id1663047912). It’s been the most reliable one I’ve used so far. I tried a few free options first, but the $4 was well worth it. It works with multiple screens—I have 4 monitors on my office setup and all display perfectly in Screens.
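If the app refuses to connect, a quick reachability check can tell you whether the problem is the network or the app. This is only a sketch under a couple of assumptions: that your laptop is running a VNC-style screen-sharing server on the conventional port 5900, and that you run the check from another device on the same Meshnet that has Python. The hostname in the usage note is a made-up placeholder.

```python
import socket

VNC_PORT = 5900  # conventional default port for VNC servers


def can_reach(host: str, port: int = VNC_PORT, timeout: float = 3.0) -> bool:
    """Return True if a TCP connection to host:port succeeds."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        return False
```

For example, `can_reach("my-laptop.example")` would use whatever name or IP your own setup assigns to the laptop. A `False` usually means screen sharing is off on the laptop or the two devices are not actually linked yet.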

Once connected, I can launch my code editor, type my objective, and guide the AI, all from my phone. I occasionally have to click “accept” or “continue,” but I rarely need to go back to my computer.

Being able to multitask at my desk has been amazing, but making real progress while living my life away from the desk is game-changing.